TRANSCRIPT:

INTRO:

Hi, I'm Chris Wu, and this is ERNE, an emotive robot which I designed and built as a personal project. I'm interested in exploring the various ways in which people can interface with machines. Typically, machines communicate information through screens and monitors: they serve as the medium for human-human interactions. However, in this project, I wanted to see if I could create a machine that communicates not information, but emotion.

TECH SPECS/CONTENT:

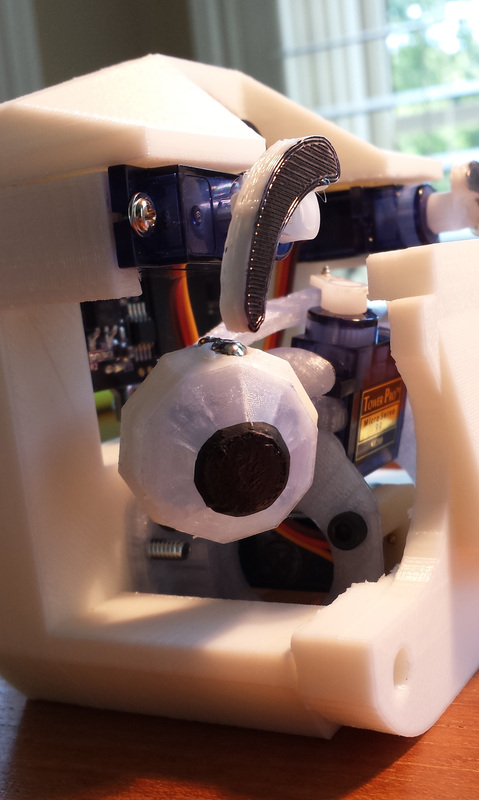

ERNE is the product of over 300 hours of sketching, prototyping, modeling, 3D printing, and programming. He is made of parts and supplies that were scavenged, custom-designed, or purchased from a hobby electronics supplier. (SHOW SHOTS OF EACH PROCESS)

All of ERNE's major hardware components were 3D-printed with an Ultimaker 2 and a self-built Printrbot RepRap. (PRINTERS IN ACTION)

ERNE features 2 RC servos that give him a full range of head movement, as well as 10 facial servos that allow him to express a range of emotions: happy, sad, angry, bored, skeptical, devious, surprised, imaginative...as well as some emotions in between. (SHOW EMOTIONS)
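One way the in-between expressions could be produced is by blending two stored servo poses. The sketch below is only an illustration under that assumption, not ERNE's actual code: the pose values, the names `HAPPY`, `SAD`, and `blend`, and the use of linear interpolation are all hypothetical.

```python
from typing import List

NUM_FACIAL_SERVOS = 10  # from the script: 10 facial servos

# Hypothetical servo angles in degrees; the real poses are not given in the script.
HAPPY: List[float] = [120, 120, 60, 60, 90, 90, 110, 70, 100, 80]
SAD: List[float] = [60, 60, 120, 120, 90, 90, 70, 110, 80, 100]


def blend(pose_a: List[float], pose_b: List[float], t: float) -> List[float]:
    """Linearly interpolate between two poses; t=0 gives pose_a, t=1 gives pose_b."""
    if len(pose_a) != len(pose_b):
        raise ValueError("poses must have the same number of servos")
    t = max(0.0, min(1.0, t))  # clamp so servos never overshoot either pose
    return [a + (b - a) * t for a, b in zip(pose_a, pose_b)]


# A face halfway between happy and sad.
halfway = blend(HAPPY, SAD, 0.5)
```

Sweeping `t` from 0 to 1 over several frames would also give a smooth transition between two expressions rather than an abrupt jump.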

At the heart of ERNE's functionality is the Pixy CMUCam5, a smart, microcontroller-friendly color camera released on Kickstarter last year. It can be configured to recognize 7 distinct color signatures, as well as various color combinations.

BEHAVIORS:

ERNE's versatile movement capabilities, combined with the Pixy's abilities as a color sensor, open up many possibilities for behaviors. Here are a few samples:

In this demo, ERNE tracks a colored object, smiling when he sees it in his field of view...and frowning when it goes away. This is similar to the way in which an infant might smile when he sees his favorite toy, then cry when it's abruptly snatched from him.
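The logic of this demo can be sketched in a few lines. This is a rough illustration, not ERNE's firmware: the `Block` type, the `react` function, the frame size, the gain, and the sign of the head correction are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Assumed size of the camera's coordinate frame, in pixels (hypothetical values).
FRAME_WIDTH = 320
FRAME_HEIGHT = 200


@dataclass
class Block:
    """One detected color blob: signature number and center position in pixels."""
    signature: int
    x: int
    y: int


def react(block: Optional[Block], pan: float, tilt: float,
          gain: float = 0.1) -> Tuple[str, float, float]:
    """Return (expression, new_pan, new_tilt) for one camera frame."""
    if block is None:
        # The object left the field of view: frown and hold the head still.
        return "sad", pan, tilt
    # Proportional step: nudge the head so the object drifts toward frame center.
    # The sign of the correction depends on how the servos are mounted.
    error_x = block.x - FRAME_WIDTH / 2
    error_y = block.y - FRAME_HEIGHT / 2
    return "happy", pan + gain * error_x, tilt + gain * error_y
```

Calling `react` once per frame with the largest detected block, or `None` when nothing is detected, gives the smile-and-follow, frown-when-gone behavior described above.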

In this demo, ERNE demonstrates the ability to distinguish between color combinations: he smiles when he sees a red-yellow-blue combo, frowns when he sees a yellow-blue-red combo, and gets angry and upset when he sees a blue-red-yellow combo. ERNE displays different emotions based on minute changes in visual stimuli: a crude simulation of how a toddler associates different objects with different meanings, and a stepping-stone to more complex visual-cognitive abilities like reading.
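The key point of this demo is that the order of the colors matters, not just which colors are present. A minimal sketch of that idea, assuming the camera's output has already been reduced to an ordered list of color names (the table name, the function name, and the "bored" fallback are hypothetical):

```python
from typing import Dict, Sequence, Tuple

# The three combos from the demo, keyed by color order.
EMOTION_BY_COMBO: Dict[Tuple[str, ...], str] = {
    ("red", "yellow", "blue"): "happy",
    ("yellow", "blue", "red"): "sad",
    ("blue", "red", "yellow"): "angry",
}


def emotion_for(colors: Sequence[str], default: str = "bored") -> str:
    """Look up the emotion for an ordered color combo; unknown combos get the default."""
    return EMOTION_BY_COMBO.get(tuple(colors), default)
```

Because the lookup key is an ordered tuple, the same three colors in a different order map to a different emotion, and any combo outside the table falls back to the default.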

Finally, I've been working on voice recognition to expand ERNE's interactivity, and have already written code snippets that demonstrate basic voice-recognition functionality…

RESULTS/PUBLICITY:

In conclusion, creating ERNE has been both intellectually stimulating and rewarding. Last December, an article I wrote on ERNE was published in Robot, an internationally distributed magazine on science, gadgets, and robotics with thousands of subscribers. I was also invited to attend HRI 2015, an international research conference in Portland, Oregon, where I was exposed to some of the latest robotics and artificial intelligence research from institutions around the world. Finally, all of ERNE's hardware is open source: all the 3D CAD files needed to print your own ERNE are online at "thingiverse.com", and as of May 2015, they have been downloaded over 400 times! I'm humbled by the doors this project has opened, and the unique experience of building a robot that is able to….
